Study of network pressure drops

EOLIOS calculates and optimizes the pressure drops of your networks

- Design, analysis, optimization

- Speed evolution

- Stationary or transient study

- Optimization of the installation

- Laminar or turbulent flow

- Sizing of suction systems

- International projects

- Project support

- A passionate team

Most of the conveying systems in industrial plants have various singularities that cause significant changes in the flow. Their influence can lead to flow changes such as phase separation, instabilities and changes in the flow regime.

In this context, it becomes difficult to estimate the head losses of such specific networks.

EOLIOS specializes in multi-scale aeraulic (air-flow) studies. This expertise allows us to provide a thorough analysis of how a gas or a liquid behaves in your networks, and to give guidance for the design of your various systems.

Pressure loss study

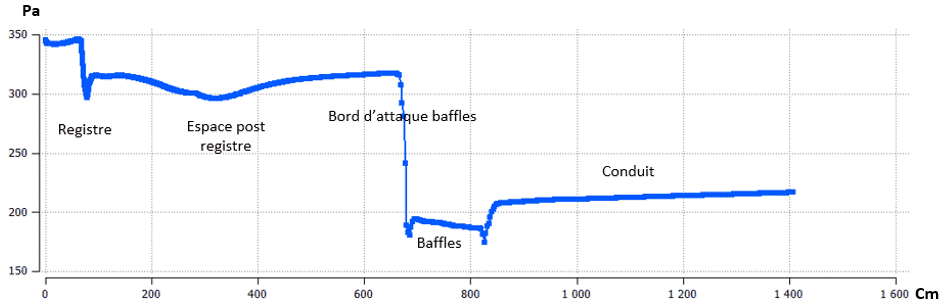

In fluid mechanics, the loss of pressure that a liquid or a gas undergoes because of friction against the walls of a pipe or a duct is called "pressure drop". This friction dissipates part of the mechanical energy of the fluid. There are two types of pressure drops:

- Linear or regular losses: energy loss due to friction on the walls of a duct or a pipe, whose roughness may vary.

- Singular losses: energy loss due to the various singularities of the network, such as section changes, elbows, inlets or outlets.
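As a reminder, these two contributions are commonly written in the following textbook form (the Darcy-Weisbach relation for the regular part, a loss coefficient for the singular part). The symbols are not taken from the original page: $f$ is the Darcy friction factor, $L$ and $D$ the pipe length and hydraulic diameter, $\rho$ the fluid density, $v$ the mean velocity and $K$ the loss coefficient of a given singularity.

```latex
\Delta P_{\text{regular}} = f \, \frac{L}{D} \, \frac{\rho v^{2}}{2},
\qquad
\Delta P_{\text{singular}} = K \, \frac{\rho v^{2}}{2}
```

Both terms scale with the dynamic pressure $\rho v^{2}/2$, which is why reducing velocity (for example by enlarging a section) is often an effective lever.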

The origin of pressure drops

Regular pressure losses

The regular pressure losses are caused by friction on the walls of the network. The more viscous the fluid, the greater the friction. The viscosity of the fluid combined with the micro asperities of the network increases the friction of the fluid and consequently the dissipation of energy.

For a given liquid or gas, the pressure drops depend on two things:

- Pipe roughness: The materials used for the ducts or pipes have more or less roughness on their surface. This property of the material results in a regular pressure drop of varying magnitude.

- The type of flow: There are different types of flow, such as laminar, transitional or turbulent flow. The difference between these regimes is reflected in the ratio of inertial forces to viscous forces (the Reynolds number).
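The influence of roughness and flow type can be illustrated with a short Python sketch. It is only a textbook-style estimate, not the EOLIOS calculation method: the function names, the 2000/4000 regime limits and the choice of the Swamee-Jain approximation for the friction factor are assumptions made for this example.

```python
import math


def reynolds(density, velocity, diameter, dynamic_viscosity):
    """Reynolds number: ratio of inertial forces to viscous forces."""
    return density * velocity * diameter / dynamic_viscosity


def flow_regime(re):
    """Classify the flow (2000 and 4000 are common textbook limits)."""
    if re < 2000:
        return "laminar"
    if re < 4000:
        return "transitional"
    return "turbulent"


def friction_factor(re, relative_roughness):
    """Darcy friction factor.

    Laminar flow: 64 / Re (roughness has no influence).
    Otherwise: Swamee-Jain explicit approximation, which is only
    indicative in the transitional zone.
    """
    if re < 2000:
        return 64.0 / re
    return 0.25 / math.log10(relative_roughness / 3.7 + 5.74 / re ** 0.9) ** 2


def regular_pressure_drop(density, velocity, diameter, length,
                          dynamic_viscosity, roughness):
    """Regular (linear) pressure drop in Pa, Darcy-Weisbach relation."""
    re = reynolds(density, velocity, diameter, dynamic_viscosity)
    f = friction_factor(re, roughness / diameter)
    return f * (length / diameter) * density * velocity ** 2 / 2.0


# Illustrative case (assumed values): water at about 20 °C in a 50 mm pipe.
re = reynolds(998.0, 2.0, 0.05, 1.0e-3)
print(flow_regime(re))
print(regular_pressure_drop(998.0, 2.0, 0.05, 10.0, 1.0e-3, 0.045e-3))
```

For the same flow, increasing the `roughness` argument raises the friction factor and therefore the regular pressure drop, which is the effect described in the list above.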

Singular pressure loss

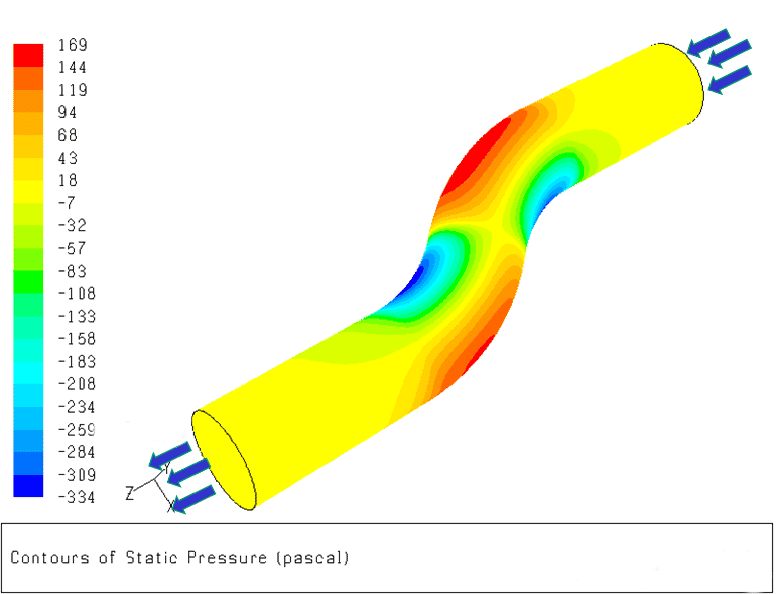

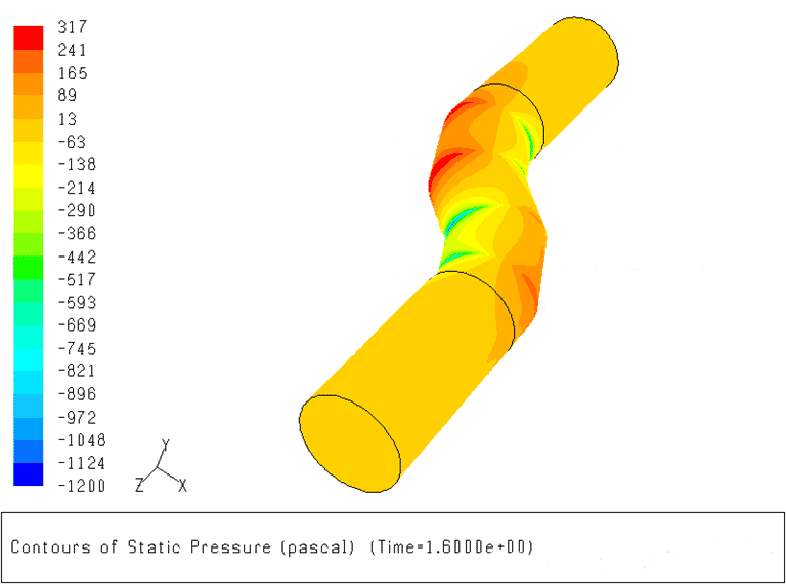

Singular pressure drops occur when the geometry changes within the network. These geometry changes disturb the flow and can sometimes lead to vortex phenomena within the pipe itself.

Typically, singular pressure drops are caused by changes in cross-section, bends and elbows, or by equipment attached to the network such as expansion boxes, heat exchangers, etc.

Their influence can lead to flow modifications such as phase separation, instabilities and changes in the flow regime. In most cases, singular pressure losses account for the major part of the total pressure losses.
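A minimal Python sketch of how singular losses are usually summed over a network is given below. The loss coefficients are hypothetical placeholder values chosen for illustration only; real coefficients depend on the exact geometry and are normally taken from reference tables, measurements or CFD. The sketch also assumes the same mean velocity through every fitting.

```python
def singular_pressure_drop(k, density, velocity):
    """Pressure drop in Pa of one singularity with loss coefficient k."""
    return k * density * velocity ** 2 / 2.0


def network_singular_drop(coefficients, density, velocity):
    """Sum of the singular losses, assuming one velocity for all fittings."""
    return sum(singular_pressure_drop(k, density, velocity)
               for k in coefficients)


# Hypothetical loss coefficients (illustrative values, not design data).
fittings = {
    "elbow": 0.9,
    "sudden contraction": 0.5,
    "outlet": 1.0,
}

# Air at roughly 1.2 kg/m^3 flowing at 10 m/s (assumed conditions).
total = network_singular_drop(fittings.values(), 1.2, 10.0)
print(f"Total singular pressure drop: {total:.1f} Pa")
```

Listing the fittings one by one in this way makes it easy to see which singularity dominates and is therefore worth optimizing first.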

Installation optimization and design aids

In the industrial fluids sector, pressure drop is a well-known phenomenon that must be taken into account. EOLIOS supports you throughout your pressure drop calculations in order to meet your needs as closely as possible. We can provide a precise calculation of the pressure drops in your systems and guide you towards the optimization of your networks and your installation.

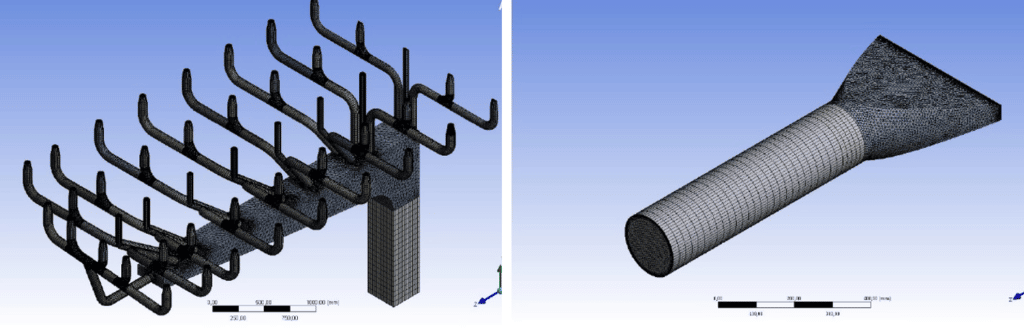

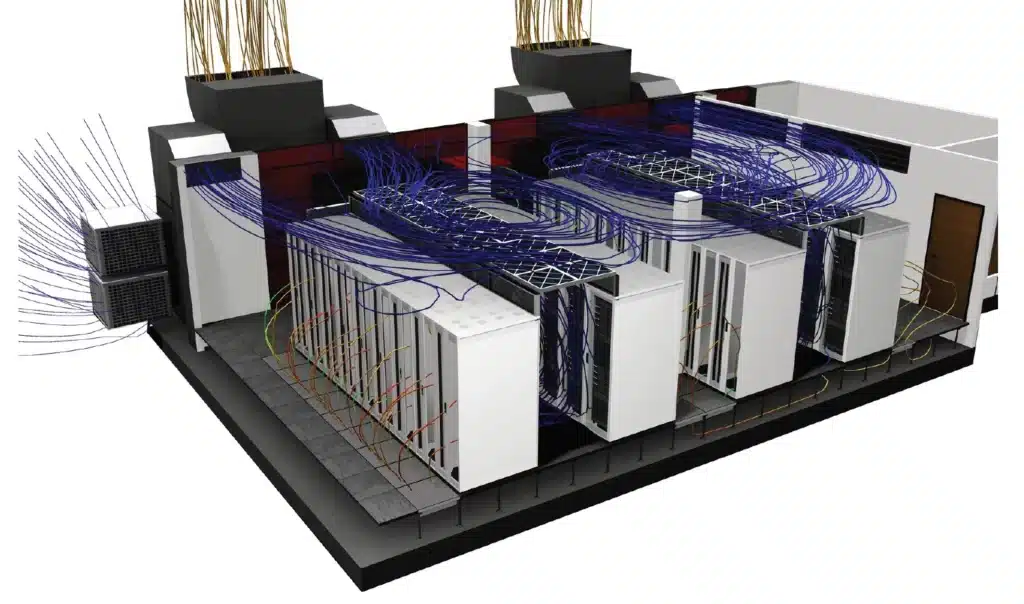

3D modeling & CFD simulation of your networks

As part of a pressure drop calculation, we can build a digital twin of your networks and run a CFD simulation. CFD simulation, whether steady-state or transient, can be a valuable aid in capturing all the phenomena at play in your systems.

Understand how air conditioning works in a data center

With the growing volume of information and the increasing computerization of work processes, keeping this information safe while servers run without interruption is becoming more and more critical. A failure in this area can suspend all company activities and lead to serious losses. One of the main conditions for stable server operation is maintaining the optimal air temperature throughout the server rooms, which is achieved with dedicated precision cooling systems.

Operating a data center is energy intensive, and the cooling system often consumes as much (or more) energy as the computers it supports.

In this article, we will review some of the most commonly used data center cooling technologies, as well as new approaches to CFD simulation.

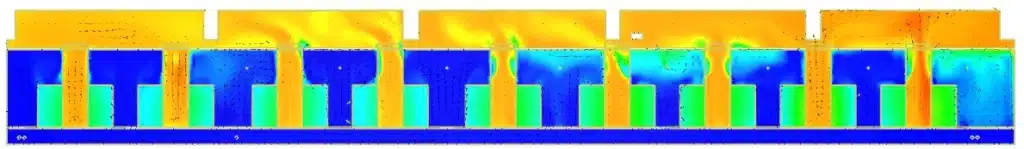

Cold aisle / hot aisle design

This is a data center rack layout, which uses alternating “cold aisle” and “hot aisle” rows.

Cold air is supplied in front of the racks (usually through grilles) so that the servers can draw it in, and the hot aisles collect the heat rejected behind the servers. The ventilation ducts are usually connected to a false ceiling, which takes the warm air from the hot aisles to be cooled. The cooled air is then delivered to the cold aisles through a raised floor or ducts (or even released freely into the room in some designs).

Empty slots in server racks should be fitted with blanking panels to prevent overheating and reduce the amount of wasted cold air. Indeed, the gaps left by missing servers can lead to parasitic air transfers, given the pressure differences between hot and cold areas. This stray air movement is wasted energy.

Chilled water system

This technology is most commonly used in medium to large data centers.

The air in the data center is supplied by air handling systems known as computer room air handlers (CRAH), and chilled water (supplied by an external cooling system) is used to cool the air.

What is the difference between CRAC and CRAH units?

CRAC units

- Use a refrigerant

- Require a compressor

CRAC units operate like home air conditioning units. They have a direct expansion system and compressors built right into the unit. They provide cooling by blowing air over a cooling coil filled with refrigerant. The refrigerant is kept cold by a compressor inside the unit. The excess heat is then rejected through a glycol mixture, water or air. While most CRAC units provide only a constant air volume and modulate by switching on and off, new models are being developed that allow the airflow to vary.

There are different ways to place CRAC units, but they are typically installed opposite the hot aisles of a data center. There, they release cooled air through the perforations in the raised floor (grilles or perforated floor tiles), cooling the servers.

CRAH units

- Use chilled water

- Have a control valve

CRAH units operate like chilled water air handlers installed in most office buildings. They provide cooling by blowing air over a cooling exchanger filled with chilled water. Chilled water is usually supplied by “Water Chillers” – otherwise known as a chilled water plant. CRAH units can regulate fan speed to maintain a set static pressure, ensuring that humidity levels and temperature remain stable.

Chilled water can be produced by direct expansion or by dry coolers with adiabatic cooling, which are much more energy-efficient.

What is the optimal temperature for a data center?

Server rooms and data centers contain a mixture of hot and cold air – server fans expel hot air during operation, while air conditioning and other cooling systems bring in cool air to counteract any hot exhaust air. Maintaining the right balance between hot and cold air has always been critical to keeping data centers available. If a data center gets too hot, the equipment is at a higher risk of failure. This failure often results in downtime, data loss and lost revenue.

In the 2000s, the recommended temperature range for data centers was 20 to 24°C. This is the range that the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) recommended as optimal for maximum equipment availability and life. This range allowed for better utilization and provided enough margin in case the air conditioning failed.

Since 2005, new standards and better equipment have become available, along with improved tolerance of higher temperatures. ASHRAE now recommends an operating temperature range of 18 to 27°C.

The rise in server inlet temperature also makes free cooling and free chilling (systems that use outside air to blow fresh air into the room, or to cool the water in place of the chiller) much more attractive, especially in temperate regions such as France. Indeed, with a room temperature setpoint of 25°C instead of 15°C, the periods of the year during which free cooling can be used without activating the air conditioning are considerably longer. This generates significant energy savings and an improvement in PUE (Power Usage Effectiveness). The same goes for free chilling, which can be used more often during the year to cool the water loops, since water temperature setpoints are now set at 15°C instead of 7°C.
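The effect of the setpoint on free cooling availability can be sketched in a few lines of Python. This is a simplified illustration, not a sizing method: the temperature samples are made-up values, and `approach_c` (the margin required between outdoor air and the setpoint) is a hypothetical parameter introduced for this example. PUE is computed with its usual definition, total facility energy divided by IT equipment energy.

```python
def pue(total_facility_energy_kwh, it_energy_kwh):
    """Power Usage Effectiveness: total facility energy / IT energy."""
    return total_facility_energy_kwh / it_energy_kwh


def free_cooling_fraction(outdoor_temps_c, setpoint_c, approach_c=3.0):
    """Fraction of samples where outdoor air is cold enough for free cooling.

    approach_c is an assumed margin between outdoor temperature and setpoint.
    """
    limit = setpoint_c - approach_c
    usable = sum(1 for t in outdoor_temps_c if t <= limit)
    return usable / len(outdoor_temps_c)


# Made-up outdoor temperature samples in °C (illustrative only).
samples = [5, 10, 14, 18, 21, 24, 28, 30]

for setpoint in (15.0, 25.0):
    share = free_cooling_fraction(samples, setpoint)
    print(f"Setpoint {setpoint:.0f} °C: free cooling usable {share:.0%} of the time")
```

With real hourly weather data for a site, the same comparison gives a first estimate of how many additional hours per year free cooling becomes usable when the setpoint is raised.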

What are the risks of a temperature set point that is too high in a data center?

Unfortunately, higher operating temperatures reduce the time available to react when the temperature rises rapidly after a cooling unit failure. A data center whose servers operate at higher temperatures is exposed to a risk of simultaneous hardware failures. Recent ASHRAE guidelines emphasize the importance of proactive monitoring of environmental temperatures inside server rooms.

What happens if it gets too hot?

When the temperature inside the data center rises too high, the equipment can easily overheat. This can damage servers. Data could be lost, causing major problems for businesses that rely on data center services. That's why all data centers must have cooling systems that can cope with a crisis or a maintenance period.

What happens if the air conditioning systems break down?

Depending on the installed power density, the air temperature inside the server room can rise extremely quickly. In simulations of a power failure, we generally observe a temperature rise of the order of 1°C per minute. This creates a significant risk of hardware degradation and data loss if the redundancy and safety systems are not properly sized. In addition, the time needed for the compressors of the air conditioning systems to restart and reach full power is an issue for the most demanding rooms. To delay the effects of the temperature rise, thermal inertia systems can store heat for a few minutes and smooth the temperature rise curve.
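An order of magnitude for this temperature rise can be obtained from a very simple energy balance, sketched below in Python. This is a lumped, well-mixed estimate and not a substitute for a CFD simulation: the room volume, the power and the `extra_thermal_capacity_j_per_k` parameter (standing for the thermal mass of equipment, structure or an inertia system) are assumptions made for the example.

```python
AIR_DENSITY = 1.2           # kg/m^3, approximate value for room air
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg.K), approximate value for air


def temperature_rise_rate(it_power_w, room_volume_m3,
                          extra_thermal_capacity_j_per_k=0.0):
    """Average temperature rise in °C per minute with no cooling.

    Assumes all the IT power heats a perfectly mixed air volume, plus an
    optional extra thermal capacity (assumed parameter) that absorbs heat.
    """
    air_capacity = AIR_DENSITY * AIR_SPECIFIC_HEAT * room_volume_m3
    return 60.0 * it_power_w / (air_capacity + extra_thermal_capacity_j_per_k)


def minutes_until(limit_c, start_c, rate_c_per_min):
    """Time in minutes before the room reaches limit_c at a constant rate."""
    return (limit_c - start_c) / rate_c_per_min


# Assumed case: 10 kW dissipated in a 500 m^3 room, air only.
rate = temperature_rise_rate(10_000.0, 500.0)
print(f"Rise rate: {rate:.2f} °C/min")
print(f"Time from 25 °C to 35 °C: {minutes_until(35.0, 25.0, rate):.1f} min")
```

Passing a non-zero `extra_thermal_capacity_j_per_k` lowers the rise rate, which is the smoothing effect expected from inertia systems.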

Why perform a CFD simulation of a data center?

CFD simulation provides information on the relationship between the operation of mechanical systems and changes in the thermal load of IT equipment. With this information, IT and site personnel can optimize airflow efficiency and maximize cooling capacity.